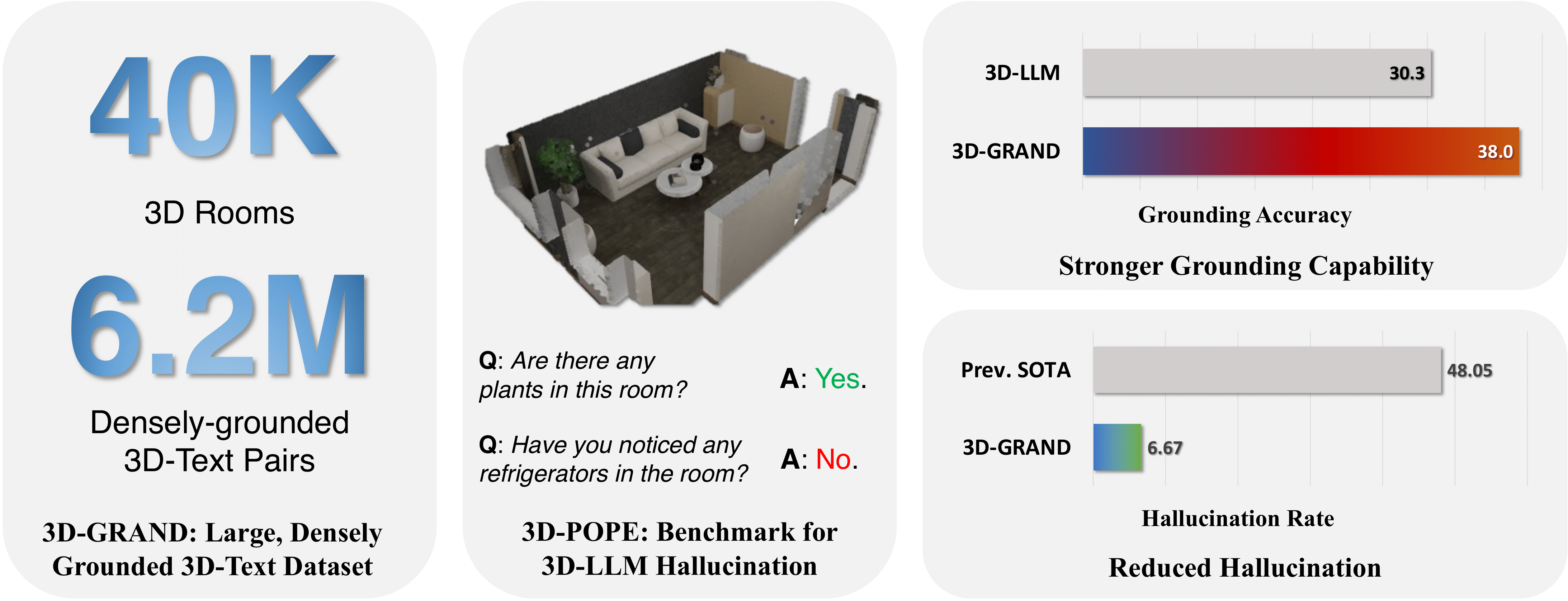

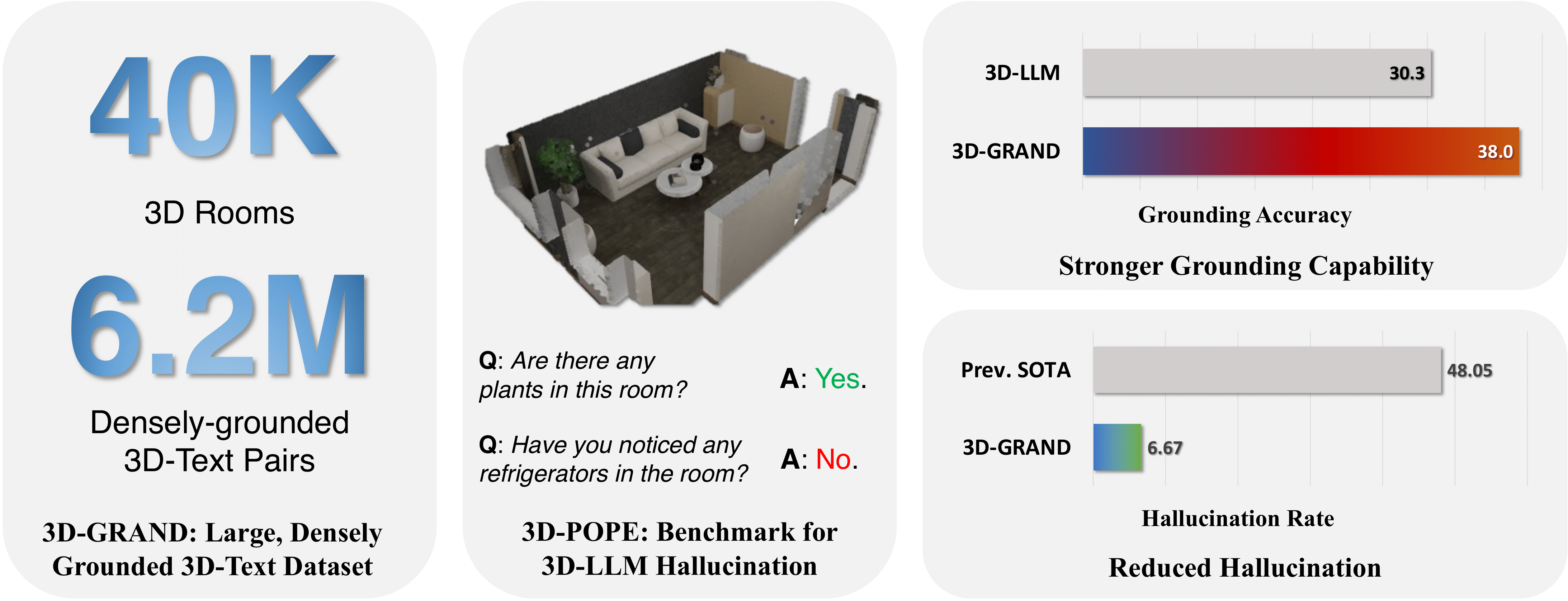

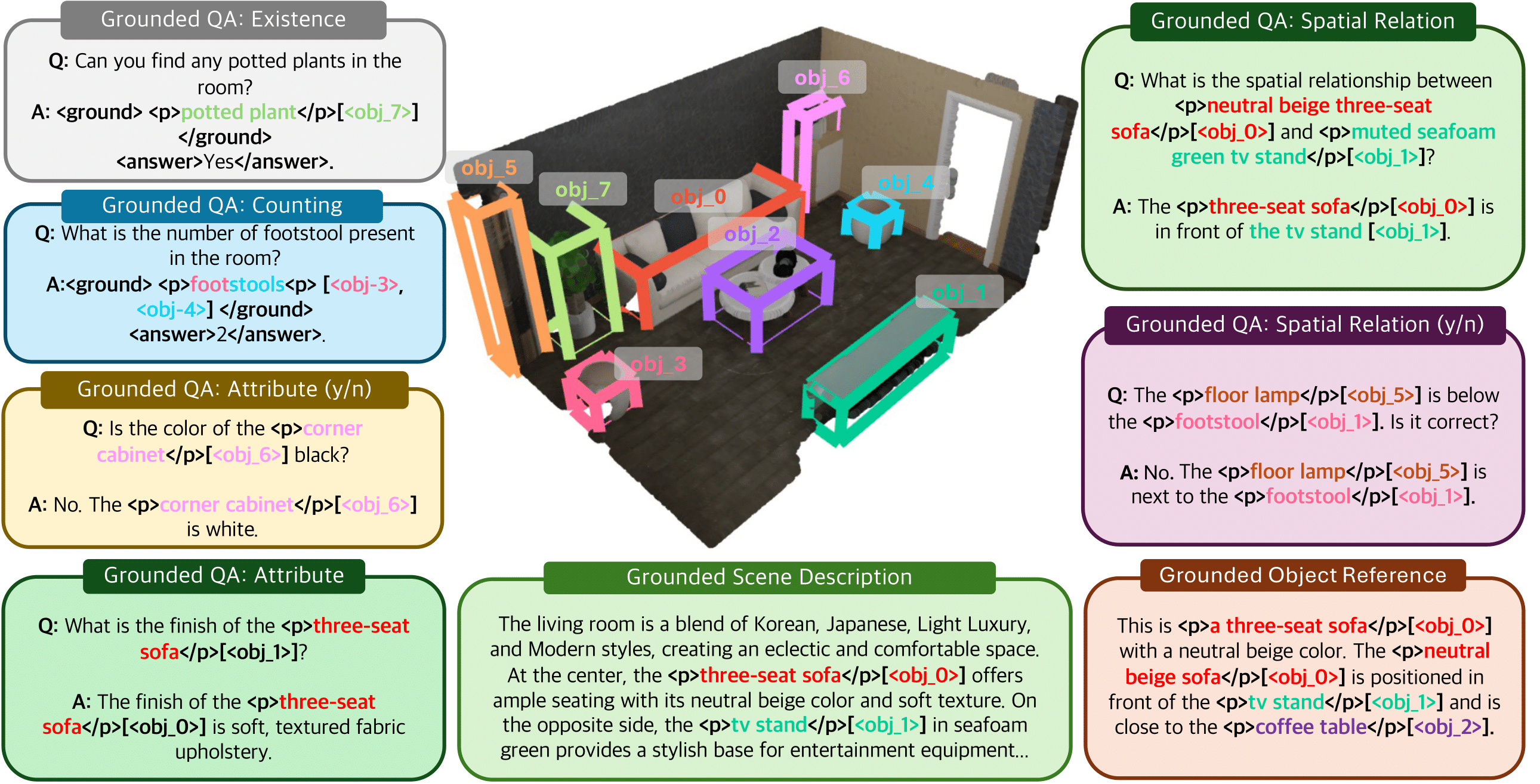

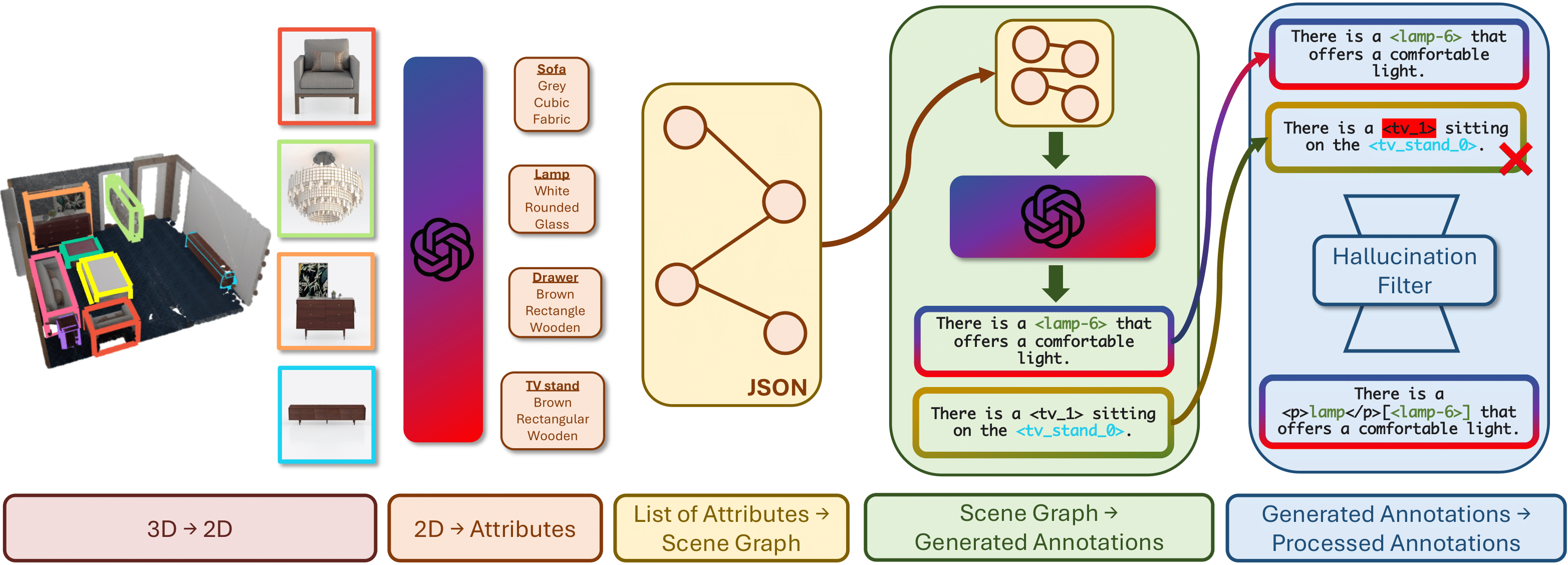

The integration of language and 3D perception is crucial for developing embodied agents and robots that comprehend and interact with the physical world. While large language models (LLMs) have demonstrated impressive language understanding and generation capabilities, their adaptation to 3D environments (3D-LLMs) remains in its early stages. A primary challenge is the absence of large-scale datasets that provide dense grounding between language and 3D scenes. In this paper, we introduce 3D-GRAND, a pioneering large-scale dataset comprising 40,087 household scenes paired with 6.2 million densely-grounded scene-language instructions. Our results show that instruction tuning with 3D-GRAND significantly reduces hallucinations and enhances the grounding capabilities of 3D-LLMs compared to models trained without dense grounding. As part of our contributions, we propose a comprehensive benchmark 3D-POPE to systematically evaluate hallucination in 3D-LLMs, enabling fair comparisons among future models. Our experiments underscore a scaling effect between dataset size and 3D-LLM performance, emphasizing the critical role of large-scale 3D-text datasets in advancing embodied AI research. Through 3D-GRAND and 3D-POPE, we aim to equip the embodied AI community with essential resources and insights, setting the stage for more reliable and better-grounded 3D-LLMs.

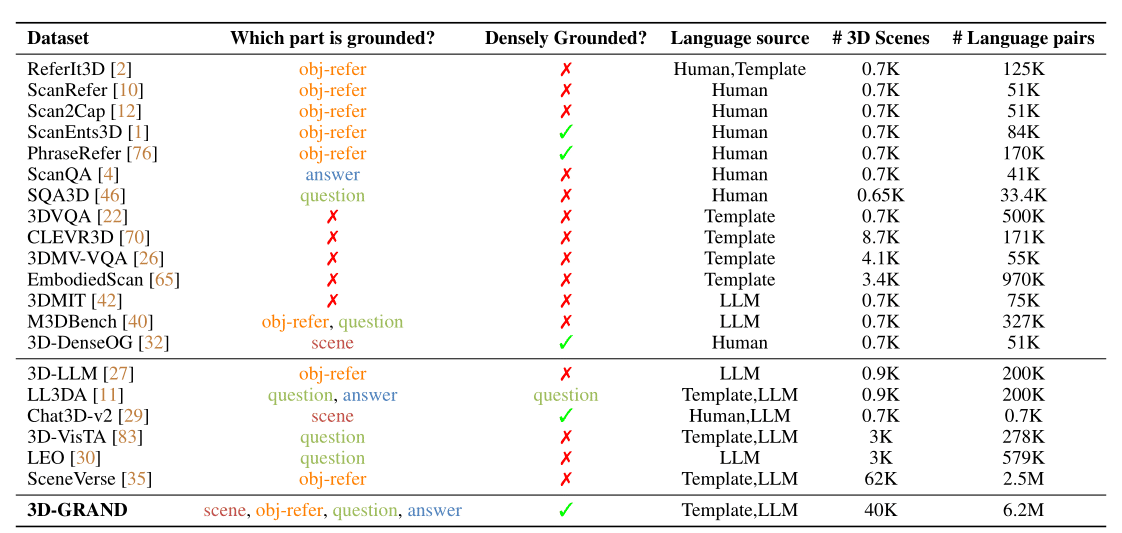

| 3D-POPE | Model | Precision | Recall | F1 Score | Accuracy | Yes (%) |

|---|---|---|---|---|---|---|

| Random | Random Baseline | 50.00 | 50.00 | 50.00 | 50.00 | 50.00 |

| 3D-LLM | 50.03 | 99.88 | 66.67 | 50.07 | 99.81 | |

| 3D-VisTA | 50.12 | 53.58 | 51.79 | 49.66 | 53.95 | |

| LEO | 51.95 | 77.65 | 62.25 | 52.91 | 74.73 | |

| Ours zero-shot (Grounding) | 93.34 | 84.25 | 88.56 | 89.12 | 45.13 | |

| Popular | Random Baseline | 50.00 | 50.00 | 50.00 | 50.00 | 50.00 |

| 3D-LLM | 49.97 | 99.88 | 66.61 | 49.94 | 99.94 | |

| 3D-VisTA | 47.40 | 51.88 | 49.54 | 49.49 | 52.30 | |

| LEO | 48.30 | 77.65 | 59.55 | 47.27 | 80.38 | |

| Ours zero-shot (Grounding) | 73.05 | 84.28 | 78.26 | 76.59 | 57.69 | |

| Adversarial | Random Baseline | 50.00 | 50.00 | 50.00 | 50.00 | 50.00 |

| 3D-LLM | 49.97 | 99.88 | 66.61 | 49.94 | 99.94 | |

| 3D-VisTA | 48.28 | 54.39 | 51.15 | 51.14 | 52.99 | |

| LEO | 48.47 | 77.98 | 59.78 | 47.52 | 80.45 | |

| Ours zero-shot (Grounding) | 69.86 | 84.21 | 76.37 | 73.95 | 60.26 |

@misc{yang2024_3D_GRAND,

title={3D-GRAND: A Million-Scale Dataset for 3D-LLMs with Better Grounding and Less Hallucination},

author={Jianing Yang and Xuweiyi Chen and Nikhil Madaan and Madhavan Iyengar and Shengyi Qian and David F. Fouhey and Joyce Chai},

year={2024},

eprint={2406.05132},

archivePrefix={arXiv},

primaryClass={cs.CV}

}